Last week, the BSA, the trade group representing software vendors, appointed Victoria Espinel as its new President and Chief Executive.

Read more at the ITAM Review.

This month, Gartner have released their latest MarketScope for the IT Asset Management repository.

For those of us involved in manufacturing ITAM tools, the MarketScope report is important. It reflects the voice of our customers. It has a very wide audience in the industry. Perhaps most importantly, it shows that standing still is not an option. Over the last three reports, published in 2010, 2011, and 2013, several big-name vendors have seen their ratings fall. The bar is set higher every year, and rightly so.

IT Asset Management has been undergoing an important and inexorable change over recent years. Having often been unfairly pigeon-holed as custodians of a merely financial or administrative function, smart IT Asset Managers now find themselves in an increasingly vital position in the evolving IT organization. The image of the “custodian of spreadsheets” is crumbling. Gartner Analyst Patricia Adams’s introduction to this new MarketScope report gets straight to the point:

The penetration of new computing paradigms, such as bring your own device (BYOD), software as a service (SaaS), infrastructure as a service (IaaS) and platform as a service (PaaS), into organizations, is forcing the ITAM discipline to adopt a proactive position.

Last year, I was fortunate to have the chance to speak at the Annual Conference and Exhibition of IAITAM, in California. It’s an organization I really enjoy working and interacting with, because the people involved are genuine practitioners: thoughtful, intelligent, and doing this job on a day-to-day basis. I’d just finished my introduction when somebody put their hand up.

“Before we start, please could you tell us what you mean when you say ‘IT Asset’?”

It’s a great question; in fact it’s absolutely fundamental. And different people will give you different answers. It took the ITIL framework – influencer of so much IT management thinking – more than a decade to acknowledge that IT Assets were anything other than simple items needing basic financial management. My answer to this question was much more in line with ITIL’s evolved definition of the Service Asset: it might include any of the components that underpin the IT organization, whether they’re physical, virtual, software, services, capabilities, contracts, documents, or numerous other items. IT Assets are the vital pieces of IT services.

If it costs money to own, use or maintain; if it could cause a risk or liability; if it’s supported by someone; if we need to know what it’s doing and for whom; if customers would quickly notice if it’s gone… then it’s of significant importance to the IT Asset Manager. Why? Because it’s probably of significant importance to the executives who depend increasingly on the IT Asset Manager.

One simple example of evolved IT Asset Management: A commercial application might be hosted by a 3rd party, running in a data centre you’ll never see, on virtual instances moving transparently from device to device, supported by a reseller. But if you can’t show a software auditor that you have the right to run it in the way that you are running it, the financial consequences can be huge. To provide that insight, the Asset Manager will need to work with numerous pieces of data, from a diverse set of sources.
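At its simplest, the auditor’s question comes down to reconciling what you are entitled to run against what is actually running, as observed from several sources. The Python sketch below is a minimal illustration under loud assumptions: the product names, instance names and source labels are all hypothetical, and it assumes one license covers one instance, whereas real license metrics (per core, per user, per processor) are usually far more involved.

```python
from collections import Counter

# Hypothetical data: real inputs would come from discovery tools, virtualization
# hosts, hosting providers' reports and procurement records.
entitlements = {"AppServer": 8, "ReportingSuite": 2}  # licenses owned

deployments = [
    # (product, source that reported it, instance identifier)
    ("AppServer", "vm_inventory", "vm-01"),
    ("AppServer", "discovery", "vm-01"),  # same instance, seen by a second source
    ("AppServer", "vm_inventory", "vm-02"),
    ("AppServer", "hosting_provider", "cloud-17"),
    ("ReportingSuite", "discovery", "srv-a"),
    ("ReportingSuite", "discovery", "srv-b"),
    ("ReportingSuite", "hosting_provider", "cloud-22"),
]


def reconcile(entitlements, deployments):
    """Return {product: shortfall} for products running on more instances
    than they are licensed for, assuming one license per instance."""
    # Count each (product, instance) pair once, however many sources saw it.
    unique_instances = {(product, instance) for product, _source, instance in deployments}
    in_use = Counter(product for product, _instance in unique_instances)
    return {
        product: count - entitlements.get(product, 0)
        for product, count in in_use.items()
        if count > entitlements.get(product, 0)
    }


print(reconcile(entitlements, deployments))  # {'ReportingSuite': 1}
```

The de-duplication step matters: the same virtual instance is often reported by more than one source, and counting it twice would overstate the shortfall.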

The role of Asset Management in the new, service-oriented IT organization is summed up by Martin Thompson in the influential ITAM Review:

“ITAM should be a proactive function – not just clearing up the mess and ensuring compliance but providing a dashboard of the costs and value of services so the business can change accordingly.”

Asset Managers need to redefine their roles, and we need to ensure our products grow with them. We need to continue to provide ways to manage mobility, and cloud, and multi-sourcing, and all of the other emerging building blocks of IT organizations. Our tools must integrate widely, gather information from an increasing range of sources, support automated and manual processes, and provide effective feedback, insight and control. Our goal must be continually to enable the Asset Manager to be a vital and trusted source of control and information.

Gartner’s expectations are shaped by the customers, practitioners and executives with whom they speak. In their words, the old image of an ITAM tool as a simple repository of data is evolving: “… from ‘What do I have?’ to ‘What insight can ITAM provide to improve IT business decisions?’”

I’m very proud that we have held our “Positive” rating over the last three MarketScope reports in this area. Gartner’s message to ITAM vendors is clear: you have to keep moving and evolving. The bar will continue to rise.

Image courtesy of Sebastian Mary on Flickr, used with thanks under Creative Commons licensing.

In a previous post, we discussed the fact that IT Asset Management is underappreciated by the organizations which depend on it.

That article discussed a framework through which we can measure our performance within ITAM, and build a structured and well-argued case for more investment into the function. I’ve been lucky enough to meet some of the best IT Asset Management professionals in the business, and have always been inspired by their stories of opportunities found, disasters averted, and millions saved. ITAM, done properly, is never just a cataloging exercise.

As the evolution of corporate IT continues at a rapid pace, there is a huge opportunity (and a need) for Asset Management to become a critical part of the management of that change. The role of IT is changing fundamentally: Historically, most IT departments were the primary (or sole) provider of IT to their organizations. Recent years have seen a seismic shift, leaving IT as the broker of a range of services underpinned both by internal resources and external suppliers. As the role of the public cloud expands, this trend will only accelerate.

Here are four ways in which the IT Asset Manager can ensure that their function is right at the heart of the next few years’ evolution and transition in IT:

1: Ensure that ITIL v3’s “Service Asset and Configuration Management” concept becomes a reality

IT Asset Management and IT Service Management have often, if not always, existed with a degree of separation. In Martin Thompson’s survey for the ITAM Review, in late 2011, over half of the respondents reported that ITSM and ITAM existed as completely separate entities.

Despite its huge adoption in IT, previous incarnations of the IT Infrastructure Library (ITIL) framework did not significantly detail IT Asset Management as many practitioners understand it. Indeed, the ITIL version 2 definition of an Asset was somewhat unhelpful:

“Asset”, according to ITIL v2:

“Literally a valuable person or thing that is ‘owned’, assets will often appear on a balance sheet as items to be set against an organization’s liabilities. In IT Service Continuity and in Security Audit and Management, an asset is thought of as an item against which threats and vulnerabilities are identified and calculated in order to carry out a risk assessment. In this sense, it is the asset’s importance in underpinning services that matters rather than its cost”

This narrow definition needs to be read in the context of ITIL v2’s wider focus on the CMDB and Configuration Items, of course, but it still arguably didn’t capture what Asset Managers all over the world were doing for their employers: managing the IT infrastructure supply chain and lifecycle, and understanding the costs, liabilities and risks associated with its ownership.

ITIL version 3 completely rewrites this definition, and goes broad. Very broad:

“Service Asset”, according to ITIL v3:

“Any Capability or Resource of a Service Provider.

Resource (ITIL v3): [Service Strategy] A generic term that includes IT Infrastructure, people, money or anything else that might help to deliver an IT Service. Resources are considered to be Assets of an Organization.

Capability (ITIL v3): [Service Strategy] The ability of an Organization, person, Process, Application, Configuration Item or IT Service to carry out an Activity. Capabilities are intangible Assets of an Organization.”

This is really important. IT Asset Management has a huge role to play in enabling the organization to understand the key components of the services it is providing. The building blocks of those services will not just be traditional physical infrastructure, but will be a combination of physical, logical and virtual nodes, some owned internally, some leased, some supplied by external providers, and so forth.

In many cases, it will be possible to choose from a range of such options, and a range of suppliers, to fulfill any given task. Each option will still bear costs, whether up-front, ongoing, or both. There may be a financial contract management context, and potentially software licenses to manage. Support and maintenance details, both internal and external, need to be captured.

In short, it’s all still Asset management, but the IT Asset Manager needs to show the organization that the concept of IT Assets wraps up much more than just pieces of tin.

2: Learn about the core technologies in use in the organization, and the way they are evolving

A good IT Asset Manager needs to have a working understanding of the IT infrastructure on which their organization depends, and, importantly, the key trends changing it. It is useful to monitor information sources such as Gartner’s Top 10 Strategic Technology Trends, and to consider how each major technology shift will impact the IT resources being managed by the Asset Manager. For example:

Big Data will change the nature of storage hardware and services. Estimates of the annual growth rate of stored data in the corporate datacenter typically range from 40% to over 70%. With this level of rapid data expansion, technologies will evolve rapidly to cope. Large monolithic data warehouses are likely to be replaced by multiple systems, linked together with smart control systems and metadata.
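To put those growth rates in perspective, here is a quick compounding calculation in Python. It simply assumes a constant annual growth rate, which real storage estates rarely follow exactly, and shows how much larger the data estate becomes over three years and how often it doubles.

```python
import math


def growth_multiple(annual_rate, years):
    """How many times larger stored data becomes after `years` of
    constant compound growth at `annual_rate` (0.4 means 40%)."""
    return (1 + annual_rate) ** years


def doubling_time(annual_rate):
    """Years for stored data to double at a constant annual growth rate."""
    return math.log(2) / math.log(1 + annual_rate)


for rate in (0.40, 0.70):  # the low and high ends of the estimates quoted above
    print(f"{rate:.0%} a year: x{growth_multiple(rate, 3):.2f} over three years, "
          f"doubling every {doubling_time(rate):.1f} years")
```

At 40% a year, the estate grows roughly 2.7 times over three years and doubles about every two years; at 70%, it grows almost fivefold over the same period.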

Servers are evolving rapidly in a number of different ways. Dedicated appliance servers, often installed in a complete unit by application service providers, are simple to deploy but may bring new operating systems, software and hardware types into the corporate environment for the first time. With an increasing focus on energy costs, many tasks will be fulfilled by much smaller server technology, using lower powered processors such as ARM cores to deliver perhaps hundreds of servers on a single blade.

Software-Defined Networks will do for network infrastructure changes what virtualization has done for servers: changes will be faster, simpler, and propagated across multiple tiers of infrastructure in single operations. Put simply, the network assets underpinning your key services might not be doing the same thing in an hour’s time.

“The Internet of Things” refers to the rapid growth in IP-enabled smart devices.

Gartner now state that over 50% of internet connections are “things” rather than traditional computers. Their analysis continues by predicting that in more than 70% of organizations, a single executive will have oversight of all internet-connected devices. That executive, of course, will usually be the CIO. Those devices? They could be almost anything. From an Asset Management point of view, this could range from managing the support contracts on IP-enabled parking meters to monitoring the Oracle licensing implications of forklift trucks (this is a real example, found in Oracle’s increasingly labyrinthine Software Investment Guide). IT Asset Management’s scope will go well beyond what many might consider to be IT.

3: Be highly cross-functional to find opportunities where others haven’t spotted them

The Asset Manager can’t expect to be an expert in every developing line of data center technology, and every new cloud storage offering. However, by working with each expert team to understand their objectives, strategies, and roadmaps, they can be at the center of an internal network that enables them to find great opportunities.

A real-life example is a British medical research charity, working at the frontline of research into disease prevention. The core scientific work they do is right at the cutting edge of big data, and their particular requirements in this regard lead them to some of the largest, fastest and most innovative on-premise data storage and retrieval technologies (cloud storage is not currently viable for this: “The problem we’d have for active data is the access speed – a single genome is 100Gb – imagine downloading that from Google”).
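The point about access speed is easy to check with some back-of-envelope arithmetic. This Python sketch assumes the quoted “100Gb” means 100 gigabytes and uses two hypothetical link speeds; it ignores protocol overhead, latency and contention, so real transfers would be slower still.

```python
def transfer_hours(size_gigabytes, link_mbit_per_s):
    """Idealized transfer time in hours, ignoring protocol overhead,
    latency and contention on the link."""
    bits = size_gigabytes * 8 * 1000**3           # decimal gigabytes to bits
    seconds = bits / (link_mbit_per_s * 1000**2)  # Mbit/s to bit/s
    return seconds / 3600


GENOME_GB = 100  # assumes the quoted "100Gb" means 100 gigabytes

for link in (100, 1000):  # hypothetical link speeds in Mbit/s
    print(f"{link} Mbit/s: {transfer_hours(GENOME_GB, link):.1f} hours per genome")
```

Even on an uncontended 100 Mbit/s link, a single genome would take over two hours to arrive, which is hard to reconcile with active, day-to-day research work.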

These core systems are scalable to a point, but they still inevitably reach an end-of-life state. In the case of this research organization, periodic renewals are a standard part of the supply agreement. As their data centre manager told me:

“What they do is sell you a bit of kit that’ll fit your needs, front-loaded with three years support costs. After the three years, they re-look at your data needs and suggest a bigger system. Three years on, you’re invariably needing bigger, better, faster.”

With the last major refresh of the equipment, a clever decision was made: instead of simply disposing of, or selling, the now redundant storage equipment, the charity has been able to re-use it internally:

“We use the old one for second tier data: desktop backups, old data, etc. We got third-party hardware-only support for our old equipment”.

This is a great example of joined-up IT Asset Management. The equipment has already largely been depreciated. The expensive three-year, up-front (and hence capital-cost) support has expired, but the equipment can be stood up for less critical applications using much cheaper third-party support. It’s more than enough of a solution for another team’s requirements over the next few years, so an additional purchase for backup storage has been avoided.

4: Become the trusted advisor to IT’s Financial Controller

The IT Asset Manager is uniquely positioned to be able to observe, oversee, manage and influence the make-up of the next-generation, hybrid IT service environment. This should place them right at the heart of the decision support process. The story above is just one example of the way this cross-functional, educated view of the IT environment enables the Asset Manager to help the organization to optimize its assets and reduce unnecessary spend.

This unique oversight is a huge potential asset to the CFO. The Asset Manager should get closely acquainted with the organization’s financial objectives and strategy. Is there an increased drive away from capital spend, and towards subscription-based services? How much is it costing to buy, lease, support, and dispose of IT equipment? What is the organization’s spend on software licensing, and how much would it cost to use the same licensing paradigms if the base infrastructure changes to a newer technology, or to a cloud solution?

A key role for the Asset Manager in this shifting environment is that of decision support. A broad and informed oversight over the structure of IT services and the financial frameworks in place around them, together with proactive analysis of the impact of planned, anticipated or proposed changes, should enable the Asset Manager to become one of the key sources of information to executive management as they steer the IT organization forwards.

Parking meter photo courtesy of SJSharkTank on Flickr, used under Creative Commons license

Analysis of the advantages and disadvantages of BYOD has filled countless blogs, articles and reports, but has generally missed the point.

Commentators have sought to answer two questions. Firstly, if we allow our employees to use their own devices, will it save us money? Secondly, will it make them more productive?

The answer to the first question was widely assumed, early on, to be yes. An early adopter in the US government sector was the State of Delaware, which initiated a pilot in early 2011. With its BlackBerry Enterprise Server reaching end-of-life, the program aimed to retire it altogether, moving all users off the infrastructure by mid-2013 and instead making monthly payments to users to cover the costs of working on their own cellular plans:

The State agreed to reimburse a flat amount for an employee using their personal device or cell phone for state business. It was expected that by taking this action the State could stand to save $2.5 million or approximately half of the current wireless expenditure.

The State evaluated the cost of supplying its own BlackBerry devices at $80 per month, per user. The highest rate paid to employees using their own devices (for voice and data) is $40 per month.

At face value, this looks like a big saving, but many commentators – and practitioners – don’t see it as typical. One of the most prominent naysayers in this regard has been the Aberdeen Group. In February 2012, Aberdeen published a widely-discussed report which suggested that the overall cost of a BYOD program would actually be notably higher than a traditional, centralized, company-issued smartphone program:

The incremental and difficult-to-track BYOD costs include: the disaggregation of carrier billing; an increase in the number of expense reports filed for employee reimbursement; added burden on IT to manage and secure corporate data on employee devices; increased workload on other operational groups not normally tasked with mobility support; and the increased complexity of the resulting mobile landscape resulting in rising support costs.

http://blogs.aberdeen.com/communications/byod-hidden-costs-unseen-value/

Aberdeen reported the average monthly reimbursement paid to BYOD users as $70, higher than the State of Delaware’s $40. And reimbursement is an important term here: to avoid the payments being treated as a “benefit-in-kind”, employees had to submit expense reports showing proof of already-paid mobile bills.

The State had to ensure that it was not providing a stipend, but in fact a reimbursement after the fact… This avoids the issue associated with stipends being taxable under the IRS regulations.

As Aberdeen pointed out, there is a cost to processing those expense reports. They reckon the typical cost of this to be $29. Even with the State’s $40 reimbursement level, that factor alone would wipe out most of the difference compared to the $80 monthly cost of a State-issued BlackBerry, and that is before other costs such as Mobile Device Management are accounted for (another US Government pilot, at the Equal Employment Opportunity Commission, reported $10 per month, per device, for its cloud-based MDM solution). Assuming this document is genuine, it’s clearly an important marketing message for BlackBerry.
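Putting the quoted figures side by side makes the point concrete. The Python sketch below combines numbers from different sources (Delaware, Aberdeen and the EEOC pilot), so it is an illustration rather than a like-for-like comparison, and it assumes one expense report per user per month.

```python
# Monthly per-user figures quoted in the text, in US dollars.
STATE_ISSUED_DEVICE = 80  # State of Delaware's cost of a State-issued BlackBerry
EXPENSE_REPORT = 29       # Aberdeen's typical cost of processing an expense report
MDM_PER_DEVICE = 10       # EEOC pilot's cloud-based MDM cost per device


def byod_monthly_cost(reimbursement, expense_report=EXPENSE_REPORT, mdm=MDM_PER_DEVICE):
    """Per-user monthly BYOD cost, assuming one expense report per month."""
    return reimbursement + expense_report + mdm


for label, reimbursement in (("Delaware top rate", 40), ("Aberdeen average", 70)):
    cost = byod_monthly_cost(reimbursement)
    print(f"{label}: ${cost} vs ${STATE_ISSUED_DEVICE} State-issued "
          f"({cost - STATE_ISSUED_DEVICE:+d} per user per month)")
```

On these assumptions, Delaware’s top rate leaves BYOD just $1 per user per month cheaper than a State-issued device, while Aberdeen’s $70 average makes it $29 more expensive.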

Of course, there are probably ways to trim many of these costs, and perhaps a reasonable assessment would be that many organizations will be able to find benefits, but others may find it difficult.

So if the financial case is not a slam-dunk, then BYOD needs to be justified with productivity gains. And this is a big challenge: how do we find quantifiable benefits from a policy of allowing users to work with their own gadgets?

The analysis in this regard has been a mixed bag. The conclusions have often ranged from subjective to faintly baffling (such as the argument that BYOD will be a “productivity killer” because employees will no longer log in and work during their international vacations. Er… perhaps it’s just me, but if I were a shareholder of an organization that felt that productivity depended on employees working from the beach, I’d be pretty concerned).

One of the best pieces of analysis to date has been Forrester’s report, commissioned by Trend Micro, entitled “Key Strategies to Capture and Measure the Value Of Consumerization of IT”:

More than 80% of surveyed enterprises stated that worker productivity increased due to BYOD programs. These productivity benefits are achieved as employees use their mobile devices to communicate with other workers more frequently, from any location, at any time of the day. In addition, nearly 70% of firms increased their bottom line revenues as a result of deploying BYOD programs.

A nice positive message for BYOD there, but there’s arguably a bit of a leap to the conclusion about bottom-line revenue increase. It’s not particularly clear from the report how these gains have resulted directly from a BYOD program. A critic might be justified in asking how believable this conclusion is.

However, when we look at how people use their personal devices through their day, surely it’s perfectly credible to associate productivity and revenue increases with their use of consumer technology at work? Even before the working day has started, if an employee has got to their desk on time, there’s a pretty strong chance this was assisted by their smartphone. The methods, and the applications of choice, will vary from person to person: perhaps they are using satellite road navigation to avoid delays, or smoothly linking public transport options using online timetables, or avoiding queues using electronic ticketing. On top of that, if they’re on the train, they’re probably online, which can mean networking and communication has been going on even before they arrive at the building.

This reduction of friction in the daily commute, described by BMC’s Jason Frye in two blog posts, is a daily reality for many employees, and it’s indicative of the wider power of harnessing users’ affinity with their own gadgets. But how can this effectively be measured? It’s difficult, because no two employees will be doing things in quite the same way: everybody’s journey to work is different. The probability of finding the same collection of transport applications on two employees’ smartphones is near zero, yet the benefits to each individual are obvious.

Equally, every knowledge worker’s approach to their job is different, and the selection of supporting applications and tools available through consumer devices is vast. Employees will find the best tools to help them in their day job, just as they do for their commute.

Now, perhaps we can also see some flaws in the balance-sheet analysis we’ve already discussed. As employees work better with their consumer devices, they rely less on traditional business applications. The global application store is proving to be a much better selector of the best tools than any narrow assessment process, ensuring that the best tools rise to the top. Legacy applications don’t need to be expensively replaced or upgraded in the consumer world: they die out of their own accord and are easily replaced. BYOD, done well, should reduce the cost of providing software, as well as hardware.

Some commentators cite incompatibility between different applications as a potential hindrance to overall productivity, but this misses the point that the consumer ecosystem is proving much better at sharing and collaboration than the business software industry has been. Users expect their content to be able to work with other users’ applications of choice, and providers that miss this point see their products quickly abandoned (imagine how short-lived a blogging tool would be if it dropped support for RSS).

The lesson for business? Trust your employees to find the best tools for themselves. Don’t rely on over-rigid productivity studies that miss the big picture. Don’t over-prescribe; concentrate on the important things: device and data security, and the provision of effective sharing and collaboration tools that join the dots. And ask yourself whether that expense report really needs to cost $29 to process through traditional business systems and processes, when your employees are so seamlessly enabled by their smartphones…

Image courtesy of Blakespot on Flickr, used under Creative Commons license.

A few days ago, Microsoft (or rather, many of its resellers) announced a 15% price rise for its user-based Client Access License, for a range of applications. The price hike was pretty much immediate, taking effect from 1st December 2012.

The change affects a comprehensive list of applications, so it’s likely that most organizations will be affected (although there are some exceptions, such as the PSA12 agreement in the UK public sector).

Under Microsoft’s client/server licensing system, Client Access Licenses (CALs) are required for every user or device accessing a server.

Customers using these models need to purchase these licenses in addition to the server application licenses themselves (and in fact, some analysts claim that CALs provide up to 80% of license revenue derived from these models).

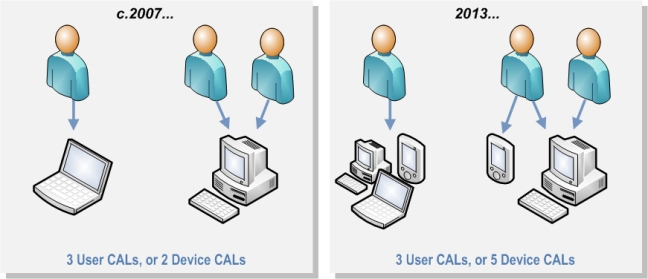

What’s interesting is that the price rise only affects User-based CALs, not Device-based CALs. Prior to this change, the price of each CAL was typically the same for any given application/option, regardless of type.

This is likely to be a response to a significant industry shift towards user-based licensing, driven to a large extent by the rise of “Bring your own Device” (BYOD). As employees use more and more devices to connect to server-based applications, the Device CAL becomes less and less attractive.

As a result, many customers are shifting to user-based licensing, and with good reason.

15% is a big rise to swallow. However, CAL licensing has often been pretty inefficient. With the burden of proof firmly on the customer, a true-up or audit often results in “precautionary spending”: “You’re not sure how many of your 5,000 users will be using this system, so we’d suggest just buying 5,000 CALs.” This may be compounded by ineffective use of the different licensing options available.

Here are three questions that every Microsoft customer affected by this change should be asking:

Do we know how many of our users actually use the software?

This is the most important question of all. It’s very easy to over-purchase CALs, particularly if you don’t have good data on actual usage. But if you can credibly show that 20% of that user base is not using the software, that could be a huge saving.

Could we save money by using both CAL types?

Microsoft and their resellers typically recommend that companies stick to one type of CAL or the other, for each application. But this is normally based on ease of management, not a specific prohibition of this approach.

But what if our sales force uses lots of mobile devices and laptops, while our warehouse staff only access a small number of shared PCs? It is likely to be far more cost effective to purchase user CALs for the former group, while licensing the shared PCs with device CALs. The saving may make the additional management overhead very worthwhile.

Do we have a lot of access by non-employee third parties such as contractors?

If so, look into the option of purchasing an External Connector license for the application, rather than individual CALs for those users or their devices. External Connectors are typically a fixed-price option, rather than a per-user CAL, so understand the breakpoints at which they become cost effective. The exercise is described at the Emma Explains Microsoft Licensing in Depth blog, and Microsoft publishes its own explanation of this license type.
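The breakpoint exercise itself is simple arithmetic. In this Python sketch the prices are hypothetical placeholders in arbitrary units, not Microsoft list prices, and it assumes an External Connector is licensed per server, so check the current licensing terms for each product before relying on the result.

```python
import math


def external_connector_breakpoint(connector_price, cal_price, servers=1):
    """Smallest number of external users at which External Connectors
    (assumed here to be licensed per server) cost no more than one CAL each."""
    return math.ceil(connector_price * servers / cal_price)


def cheaper_option(external_users, connector_price, cal_price, servers=1):
    """Compare total CAL spend for external users with the connector cost."""
    cal_total = external_users * cal_price
    connector_total = connector_price * servers
    return "External Connector" if connector_total <= cal_total else "CALs"


# Hypothetical prices in arbitrary currency units, not Microsoft list prices.
print(external_connector_breakpoint(2000, 40))             # 50
print(external_connector_breakpoint(2000, 40, servers=2))  # 100
print(cheaper_option(30, 2000, 40))                        # CALs
print(cheaper_option(80, 2000, 40))                        # External Connector
```

The server count matters: if the external users reach the application through several servers, the breakpoint rises accordingly.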

The good news is that, for most customers, the price hike will only kick in at their next renewal. If you have a current volume licensing agreement, the previous prices should still apply until then.

This gives most Software Asset Managers a bit of time to do some thinking. If you can arm your company with the answer to the above questions by the time your next renewal comes around, you could potentially save a significant sum of money, and put a big dent in that unwelcome 15% price hike.
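As a starting point for that thinking, here is a rough Python sketch comparing a precautionary “one user CAL per employee” purchase with a mix informed by usage data. Every figure is hypothetical: a device CAL price of 100 units with the user CAL 15% higher, 20% of users shown to be inactive, and warehouse staff who only ever use a pool of shared PCs. It also assumes mixing CAL types is acceptable under your agreement, which is worth confirming with your reseller.

```python
# Hypothetical prices in arbitrary units: user CALs 15% above device CALs.
DEVICE_CAL_PRICE = 100
USER_CAL_PRICE = 115


def cal_spend(user_cals, device_cals):
    """Total spend for a given mix of user and device CALs."""
    return user_cals * USER_CAL_PRICE + device_cals * DEVICE_CAL_PRICE


# Hypothetical organization with 5,000 named users.
total_users = 5000
inactive_users = 1000    # usage data shows they never touch the system (20%)
warehouse_staff = 1200   # only ever use a pool of shared PCs
shared_pcs = 60
# Everyone else is assumed to work across several devices.
multi_device_users = total_users - inactive_users - warehouse_staff

precautionary = cal_spend(user_cals=total_users, device_cals=0)
usage_based = cal_spend(user_cals=multi_device_users, device_cals=shared_pcs)
print(f"Precautionary: {precautionary:,}  Usage-based: {usage_based:,}  "
      f"Saving: {precautionary - usage_based:,}")
```

In this made-up case the usage-based mix costs 328,000 units against 575,000 for the precautionary purchase, a gap far larger than the 15% price rise itself.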

Image courtesy of Howard Lake on Flickr, used under Creative Commons licensing

This is a long article, but I hope it is an important one. I think IT Asset Management has an image problem, and it’s one that we need to address.

I want to start with a quick story:

Representing BMC Software, I recently had the privilege of speaking at the Annual Conference and Exhibition of the International Association of IT Asset Managers (IAITAM). I was curious about how well attended my presentation would be. It was up against seven other simultaneous tracks, and the presentation wasn’t about the latest new-fangled technology or hot industry trend. In fact, I was concerned that it might seem a bit dry, even though I felt pretty passionate that it was a message worth presenting.

It turned out that my worries were completely unfounded. “Benchmarking ITAM; Understand and grow your organization’s Asset Management maturity” filled the room on day 1, and earned a repeat show on day 2. That was nice after such a long flight. It proved to be as important to the audience as I hoped it would be.

I was even more confident that I’d picked the right topic when, having finished my introduction and my obligatory joke about the weather (I’m British, it was hot, it’s the rules), I asked my audience the first few questions:

“How many of you are involved in hands-on IT Asset Management?”

Of the fifty or so people present, about 48 hands went up.

“And how many of you feel that if your companies invested more in your function, you could really repay that strongly?”

There were still at least 46 hands in the air.

IT Asset Management is in an interesting position right now. Gartner’s 2012 Hype Cycle for IT Operations Management placed it at the bottom of the “Trough of Disillusionment”… that deep low point where the hype and expectations have faded. Looking on the bright side, the only way is up from here.

It’s all a bit strange, because there is a massive role for ITAM right now. Software auditors keep on auditing. Departments keep buying on their own credit cards. Even as we move to a more virtualized, cloud-driven world, there are still flashing boxes to maintain and patch, as well as a host of virtual IT assets which still cost us money to support and license. We need to address BYOD and mobile device management. Cloud doesn’t remove the role of ITAM, it intensifies it.

There are probably many reasons for this image problem, but I want to present an idea that I hope will help us to fix it.

One of the massive drivers of the ITSM market as a whole has been the development of a recognized framework of processes, objectives, and – to an extent – standards. The IT Infrastructure Library, or ITIL, has been a huge success story for the UK’s Office of Government Commerce since its creation in the 1980s.

ITIL gave ITSM a means to define and shape itself, perfectly judging the tipping point between not-enough-substance and too-much-detail.

Many people, however, contend that ITIL never quite got Asset Management. As a discipline, ITAM evolved in different markets at different times, often driven by local policies such as taxation on IT equipment. Some vendors such as France’s Staff&Line go right back to the 1980s. ITIL’s focus on the Configuration Management Database (CMDB) worked for some organizations, but was irrelevant to many people focused solely on the business of managing IT assets in their own right. ITIL v3’s Service Asset Management is arguably something of an end-around.

However, ITIL came with a whole set of tools, practices and service providers that helped organizations to understand where they currently sat on an ITSM maturity curve, and where they could be. ITIL has an ecosystem – and it’s a really big one.

Time for another story…

In my first role as an IT professional, back in 1997, I worked for a company whose IT department boldly drove a multi-year transformation around ITIL. Each year auditors spoke with ITIL process owners, prodded and poked around the toolsets (this was my part of the story), and rated our progress in each of the ITIL disciplines.

Each year we could demonstrate our progress in Change Management, or Capacity Management, or Configuration Management, or any of the other ITIL disciplines. It told us where we were succeeding and where we needed to pick up. And because this was based on a commonly understood framework, we could also benchmark against other companies and organizations. As the transformation progressed, we started setting the highest benchmark scores in the business. That felt good, and it showed our company what they were getting for their investment.

But at the same time, there was a successful little team, also working with our custom Remedy apps, who were automating the process of asset request, approval and fulfillment. Sadly, they didn’t really figure in the ITIL assessments, because, well, there was no “Asset Management” discipline defined in ITIL version 2. We all knew how good they were, but the wider audience didn’t hear about them.

Even today, we don’t have a benchmarking structure for IT Asset Management that is widely shared across the industry. There are examples of proprietary frameworks like Microsoft’s SAM Optimization Model, but it seems to me that there is no specific open “ITIL for ITAM”.

This is a real shame, because benchmarking could be a really strong tool for the IT Asset Manager to win backing from their business. There are many reasons why:

Those two points alone start to show us what a good tool it is for building a case for investment. Furthermore:

And then, provided we work to a common framework…

At the IAITAM conference, and every time I’ve raised this topic with customers since, there has been a really positive response. There seems to be a real hunger for a straightforward and consistent way of ranking ITAM maturity, and using it to reinforce our business cases.

For our presentation at IAITAM, we wanted to have a starting point, so we built one, using some simple benchmarking principles.

First, we came up with a simple scoring system. “1 to 4” or “1 to 5”, it doesn’t really matter, but we went for the former. Next, we identified what an organization might look like, at a broad ITAM level, at each score. That’s pretty straightforward too:

Asset Maturity – General Scoring Guidelines

In other words, Level 4 would be off-the-charts, industry-leading good. Level 1 would be head-in-the-sand, barely started. Next, we need to tackle that breadth. Asset, as we’ve said, is a broad subject: software and hardware, datacenter and desktop, etc…

We did this by specifying two broad areas of measurement scope:

Each of these areas can now be divided into sub-categories. For example, on “Coverage” we can now describe in a bit more detail how we’d expect an organization at each level to look:

“Asset Coverage” Scoring Levels

This process repeats for each measurement area. Once each is defined, the method of application is up to the user (for example, separate assessments might be appropriate for datacenter assets and laptops/desktops, perhaps with different ranking/weighting for each).
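As a rough illustration of how separate assessments might be rolled up, here is a minimal Python sketch. It assumes each sub-category is scored on the 1 to 4 scale described above and that assessments are combined with a simple weighted average. The sub-category "Software licensing", the weights, and the averaging method are hypothetical choices made for this example; they are not taken from the worksheet itself.

```python
from dataclasses import dataclass

# Scoring scale from the general guidelines: 1 (barely started) to 4 (industry leading).
MIN_LEVEL, MAX_LEVEL = 1, 4


@dataclass
class Assessment:
    """One maturity assessment, e.g. datacenter assets or laptops/desktops."""
    name: str
    weight: float           # relative importance of this assessment (hypothetical)
    scores: dict[str, int]  # sub-category name -> level from 1 to 4


def average_level(scores: dict[str, int]) -> float:
    """Unweighted mean level across the sub-categories of one assessment."""
    if not scores:
        raise ValueError("an assessment needs at least one scored sub-category")
    for category, level in scores.items():
        if not MIN_LEVEL <= level <= MAX_LEVEL:
            raise ValueError(f"{category}: level {level} outside {MIN_LEVEL}-{MAX_LEVEL}")
    return sum(scores.values()) / len(scores)


def overall_level(assessments: list[Assessment]) -> float:
    """Weighted mean of the per-assessment averages (an assumed roll-up method)."""
    total_weight = sum(a.weight for a in assessments)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(a.weight * average_level(a.scores) for a in assessments) / total_weight


# Example: "Coverage" comes from the worksheet; "Software licensing" is hypothetical.
datacenter = Assessment("Datacenter", weight=2.0,
                        scores={"Coverage": 3, "Software licensing": 2})
desktop = Assessment("Laptops/desktops", weight=1.0,
                     scores={"Coverage": 4, "Software licensing": 3})
print(f"{overall_level([datacenter, desktop]):.2f}")  # prints 2.83
```

Here the datacenter assessment averages 2.5 and the desktop assessment 3.5; weighting the datacenter twice as heavily gives (2 × 2.5 + 1 × 3.5) / 3 ≈ 2.83. A simple average keeps the result on the same 1 to 4 scale, which makes it easy to compare against the general scoring guidelines, but an organization could just as reasonably report each assessment separately.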

You can see our initial, work-in-progress take on this at our Communities website at BMC, without needing to log in: https://communities.bmc.com/communities/people/JonHall/blog/2012/10/17/asset-management-benchmarking-worksheet. We feel that it is strongest as a community resource. If it helps IT Asset Managers to build a strong case for investment, then it helps the ITAM sector.

Does this look like something that would be useful to you as an IT Asset Manager, and if so, would you like to be part of the community that builds it out?

Photo from the IntelFreePress Flickr feed and used here under Creative Commons Licensing without implying any endorsement by its creator.