I presented an Asset Management breakout session at the BMC Engage conference in Las Vegas today. The slides are here:

An interesting question came up at the end: what percentage accuracy is good enough in an IT Asset Management system? It’s a question that might get many different answers. Context is important: you might expect a much higher percentage (maybe 98%?) in a datacentre, but that is less realistic for client devices, which are less governable… and more likely to be locked away in forgotten drawers.

However, I think any percentage figure is pretty meaningless without another important detail: a good understanding of what you don’t know. Understanding what makes up the percentage of things that you don’t have accurate data on is arguably just as important as achieving a good positive score.
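As a rough illustration of that point, here is a minimal Python sketch that reports an accuracy figure alongside a breakdown of the records that could not be verified. Everything in it is an assumption made for the example: the record layout, the asset names, the reason labels, and the `accuracy_report` helper are hypothetical and do not come from any particular ITAM tool.

```python
from collections import Counter

# Hypothetical audit sample: each record is either "verified" or carries the
# reason its data could not be confirmed. All names and reasons are made up.
audit = [
    {"asset": "srv-001", "status": "verified"},
    {"asset": "srv-002", "status": "verified"},
    {"asset": "srv-003", "status": "verified"},
    {"asset": "lap-101", "status": "verified"},
    {"asset": "lap-102", "status": "verified"},
    {"asset": "lap-103", "status": "verified"},
    {"asset": "lap-104", "status": "verified"},
    {"asset": "lap-105", "status": "not seen by discovery"},
    {"asset": "lap-106", "status": "not seen by discovery"},
    {"asset": "saas-01", "status": "no owner recorded"},
]


def accuracy_report(records):
    """Return (accuracy percentage, Counter of reasons for unverified records)."""
    total = len(records)
    verified = sum(1 for r in records if r["status"] == "verified")
    # Group everything that is NOT verified by the reason it is unknown.
    unknowns = Counter(r["status"] for r in records if r["status"] != "verified")
    accuracy = round(100.0 * verified / total, 1) if total else 0.0
    return accuracy, unknowns


accuracy, unknowns = accuracy_report(audit)
print(f"Accuracy: {accuracy}%")
for reason, count in unknowns.most_common():
    print(f"  {reason}: {count}")
```

The headline number on its own says little. The list of reasons underneath it is what shows where the gaps are and where corrective effort might go.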

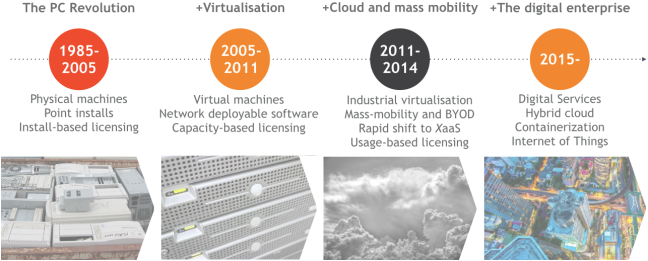

One of the key points of my presentation is that there has been a rapid broadening of the entities that might be defined as an IT Asset:

The digital services of today and the future will likely be underpinned by a broader range of Asset types than ever. A single service, when triggered, may touch everything from a 30-year-old mainframe to a seconds-old Docker instance. Any or all of those underpinning components may be of importance to the IT Asset Manager. After all, they cost money. They may trigger licensing requirements. They need to be supported. The Service Desk may need to log tickets against them.

The trouble is, not all of the new devices can be identified, discovered and managed in the same way as the old ones. The “discover and reconcile” approach to Asset data maintenance still works for many Asset types, but we may need a completely different approach for new Asset classes like SaaS services, or volatile container instances.

The IT Asset Manager may not be able to solve all those problems. They may not even be in a position to have visibility, particularly if IT has lost its overarching governance role over what Assets come into use in the organization. (SkyHigh Networks’ most recent Cloud Adoption and Risk Report puts the average number of Cloud Services in use in an enterprise at almost 1100. Does anyone think IT has oversight over all of those, anywhere?)

However, it’s still important to understand and communicate those limitations. With CIOs increasingly focused on ITAM-dependent data such as the overall cost of running a digital service, any blind spots should be identified, understood, and communicated. It’s professional, it’s helpful, it enables a case to be made for corrective action, and it avoids something that senior IT executives hate: surprises.

Question mark image courtesy Cesar Bojorquez on Flickr. Used under Creative Commons licensing.